Let Your AI Agent Argue With Itself

A simple adversarial pattern that catches bad plans before they waste hours of execution.

TL;DR

Coding agents like Claude Code support subagents—AI assistants that spin up on demand with their own fresh context, separate from your main conversation

When you’re building something and keep switching approaches—building, hitting friction, starting over—that’s usually a process problem, not a technical one

Spawning adversarial subagents pressure-tests your plan before you commit—like a pre-mortem, but independent

One prompt (“spawn 3 adversarial subagents to review this plan”) is all it takes; no setup, no configuration

I was building an ambitious side project, where you sit down excited, and two hours later you’ve made three decisions, built on the first one, realized it was wrong, and started over.

That loop—pick an approach, build it, hit friction, discard it, pick another—is a specific kind of miserable.

By the third iteration I was doing that thing Raymond Hettinger talks about—sitting back and thinking: there must be a better way.

The Four-Word Fix

Midway through that session, I typed something into Claude Code I had tried before, but in a different context:

“spawn 3 adversarial subagents to review this plan.”

The agents came back with critiques that exposed gaps I didn’t know existed—technologies I wasn’t deeply familiar with, assumptions I hadn’t questioned. I did 2 more rounds. By the end, I had better questions than I’d started with, and a plan I felt more confident in.

It reminded me of running a pre-mortem. Before committing to a direction, you pressure-test it. Except you’re not imagining failure yourself—you’re getting independent perspectives from agents with no stake in the decisions you just made.

What Are Subagents?

A quick note if you’re not already using a coding agent like Claude Code, Codex, OpenCode, or Cursor CLI: this pattern only works there (as far as I have tested so far). These tools support subagents—AI assistants you can spin up on demand for a specific job. Each one runs in its own fresh context window, separate from your main conversation. Think of them as colleagues who show up, do their work, and report back.

If you want a deeper primer, Philipp Schmid’s The Rise of Subagents is a good starting point, or you can check out this short video.

If you’re still using a chat interface for coding, you’re leaving a lot on the table. Coding agents give you a class of power-user features—subagents being one of them—that simply don’t exist in chat.

The Maker-Checker Model

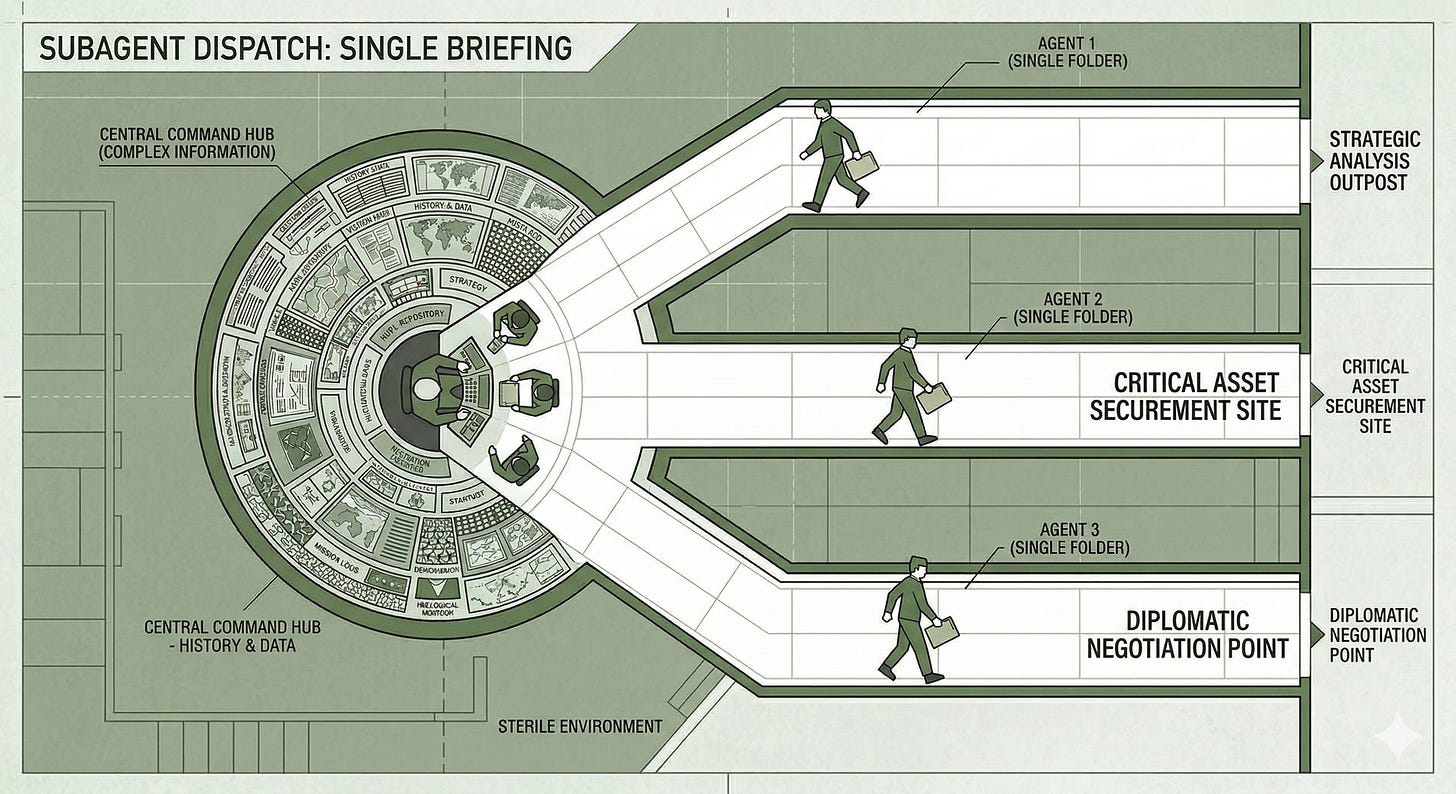

OK, so now back to my original problem. There’s a name for what’s happening here: the maker-checker model.

The idea: split a task between someone who builds and someone who critiques. Different jobs—one builds, one questions—but a shared goal. In financial systems, no transaction goes through without an independent check. The same logic applies here.

When you spawn an adversarial subagent, it starts with a completely fresh context window. It hasn’t spent the last hour agreeing with your decisions. It only gets the seed prompt—a summary of what you’re trying to do—and nothing else. No accumulated bias. No sycophancy from 50 turns of “yes, let’s go with that.”

That freshness is what makes the critique reliable. And you don’t even read it directly—your main agent synthesizes it first, filters the noise, and presents what matters.

The critique does two things—first, it gives you perspectives you can push back on. You’re not just reading feedback—you’re using it as a jumping-off point to ask better questions, dig into gaps in your own understanding, and pressure-test assumptions you didn’t know you were making. LLMs carry a lot of knowledge. Adversarial subagents are a way to tap into it.

Second, it narrows your uncertainty. You still don’t know what you don’t know—that doesn’t change. But something independent reviewed your plan, and the gap between where you are and where you want to go feels smaller. That’s worth a lot, especially when you’re building in territory you’re not fully familiar with.

Beyond Planning

I use this pattern beyond planning. After writing code, I’ll spawn one to find gaps in the implementation. Finishing a blog post? Same thing—flow, structure, readability. The specific task changes. The prompt barely does.

How many agents to spawn? I usually say 3, sometimes 1. Honestly, I don’t have a formula. It’s still arbitrary. But even a single adversarial pass catches things you’d otherwise miss.

Just Say the Words

I try this every time I’m stuck on a plan or unsure about an implementation:

Spawn 3 adversarial subagents to review your plan.

That’s it—no setup, no special workflow. Just say the words. I’ve made it a habit, and it’s one of those small shifts that makes the uncertainty feel a little more manageable.

If you want to follow along beyond the blog, find me on Twitter/X at @dhruvbaldawa. I’d love to hear your thoughts — what topics around AI and developer productivity are you most curious about?