Notes on Building with AI: February 2026

What I noticed when I stopped writing code

TL;DR

The workflow got simpler in February, not more complex: better results, less overhead

The workflow is a dial: two modes depending on how much you know about the problem

~100% of my code is now AI-written—not the goal, just a byproduct of getting the process right

Planning takes more time now—I re-engage at verification

AI agents are unpredictable, and once you feel close to done, stopping is harder than it sounds

If you love writing code, this shift won’t sit well. If you love building things, it’s the best time to do it.

Every month, I expected the workflow to get more complex. In February, it got simpler.

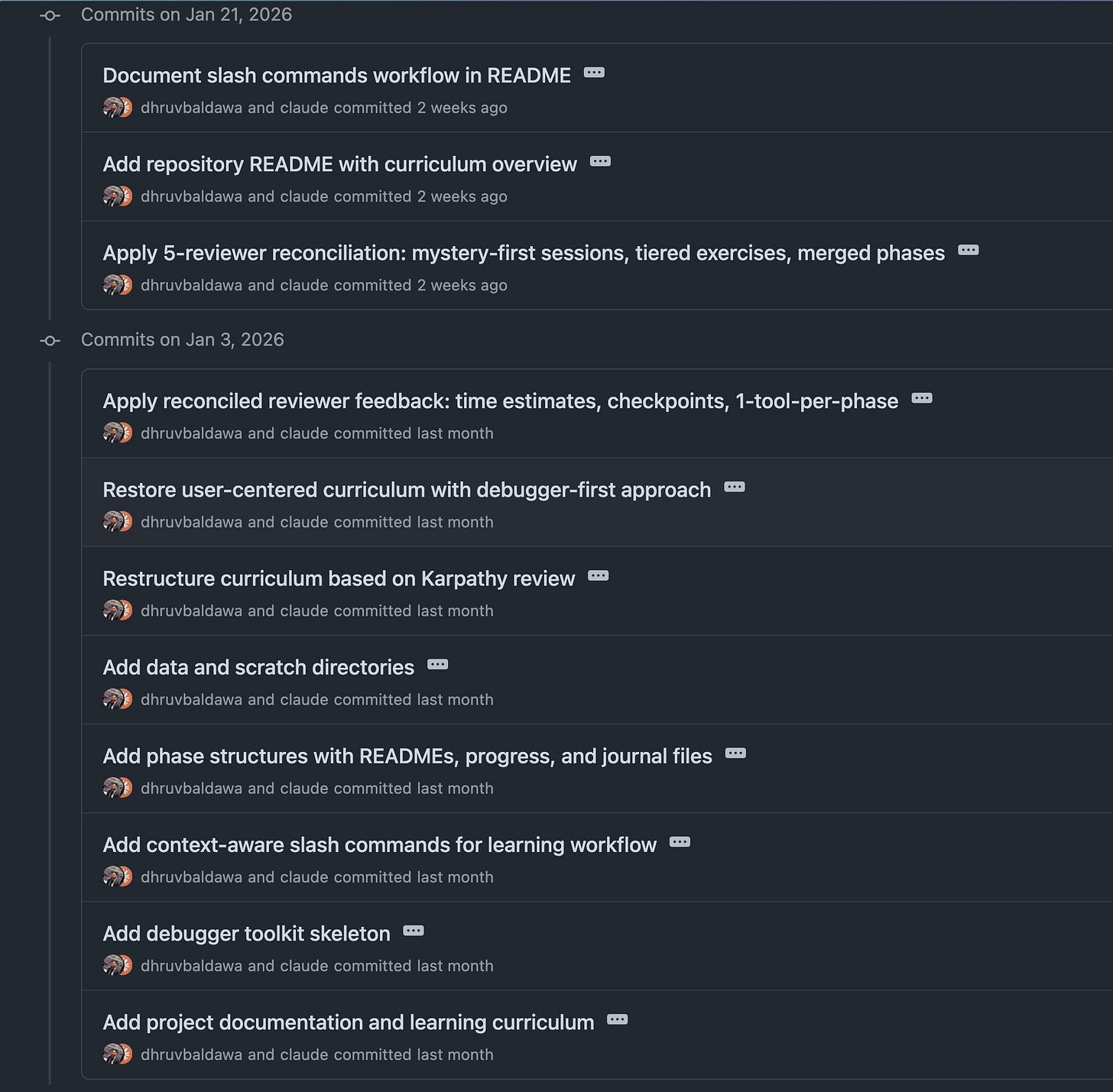

October was about adding structure—a 4-phase workflow, two custom prompts, spec-driven development. January was about adding infrastructure, including review agents, sandboxing, and a Discord integration that pinged me when something needed attention. Each month, more pieces.

So I expected February to be more of the same. Instead, I ended up doing less. The models improved, and Claude Code’s native features matured. I spent less time managing the workflow and more time building things. And the output—the quality, the speed, the ambition of what I was attempting—went up.

The Workflow Is a Dial

The thing I kept adjusting in February wasn’t which tool to use. It was how much control to hand over.

Most projects fall somewhere on a spectrum between “I know exactly how to do this” and “I’m not really sure what I want”. So the workflow has two modes.

When I know what I’m building, I go heavy on upfront planning (a detailed commit plan, validation criteria for each task, and a review cycle baked in) and then step back. The automated workflow handles quality from there. I’m not reviewing every commit manually; the process is the safeguard. This is where the ~100% AI-written code comes from: not because I’m hands-off, but because I’ve invested in detailed planning upfront.
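The exact setup is left for a later post, so here is only a minimal Python sketch of the idea. It assumes a plan is an ordered list of atomic commits, each carrying its own validation criteria. `PlannedCommit` and `unvalidated` are hypothetical names for illustration, not Claude Code features. The point is that a commit without criteria shows up as a gap before any agent starts work.

```python
from dataclasses import dataclass, field


@dataclass
class PlannedCommit:
    """One atomic commit in a hypothetical upfront plan."""
    title: str
    # Criteria that must hold before this commit is accepted.
    validation: list[str] = field(default_factory=list)


def unvalidated(plan: list[PlannedCommit]) -> list[str]:
    """Return titles of planned commits that have no validation criteria yet."""
    return [c.title for c in plan if not c.validation]


plan = [
    PlannedCommit("Add config loader", ["unit tests for missing keys pass", "no new lint warnings"]),
    PlannedCommit("Wire loader into CLI", ["--config flag covered by an integration test"]),
    PlannedCommit("Update README"),
]

print(unvalidated(plan))  # ['Update README']
```

Checking for gaps like this before stepping back is one way to make "the process is the safeguard" concrete. Every task has a stated definition of done before any code exists.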

When I don’t know what I’m building, I slow down. The focus shifts to uncovering unknowns before writing a single line of code. This is where I use what I think of as adversarial planning—sub-agents whose job is to find problems in the plan before I commit to it. I spawn sub-agents in the same Claude Code session and ask them to tear the plan apart. What could go wrong? What’s fragile? What assumptions am I making that might not hold?

These agents are deliberately aggressive. The goal isn’t to validate the plan—it’s to surface every risk I’m not thinking about, before I’ve spent tokens and time going down the wrong path.

For longer, more complicated projects, I use a technical-planning skill I’ve built that makes risk-surfacing a formal part of the planning phase—so the hard problems get solved first, not discovered halfway through.

Once the unknowns are known, I switch back to the first mode and let the workflow run.

Trusting the Process

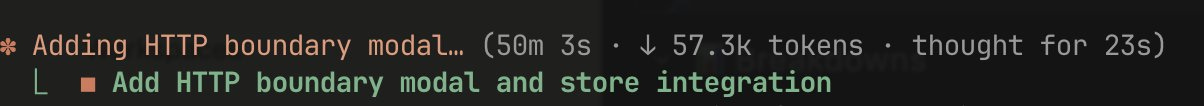

I’d kick off a commit, walk away, and come back 45 minutes later to find it still running. Not because it was large. I run three agents per commit, one to implement and two to review, and the guideline is extremely clear: quality over speed. The implementer writes the code, the reviewers find problems, and it goes back for revisions. Sometimes that takes three or four rounds.
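To illustrate that loop, here is a minimal Python sketch that models the agents as plain functions. The implementer takes the task plus all feedback so far, and each reviewer returns a list of problems, where an empty list means approval. `run_commit`, `max_rounds`, and the toy agents are hypothetical. In practice each would be a model call, and this is not the actual configuration described in the next post.

```python
from typing import Callable

# Hypothetical agent shapes; real agents would be model calls, not plain functions.
Implementer = Callable[[str, list[str]], str]  # (task, all feedback so far) -> code
Reviewer = Callable[[str], list[str]]          # code -> problems found (empty = approve)


def run_commit(task: str, implement: Implementer, reviewers: list[Reviewer],
               max_rounds: int = 4) -> tuple[str, int]:
    """Implement, gather every reviewer's issues, and revise until all reviewers approve."""
    history: list[str] = []  # every issue raised so far, fed back to the implementer
    issues: list[str] = []
    for round_no in range(1, max_rounds + 1):
        code = implement(task, history)
        issues = [issue for review in reviewers for issue in review(code)]
        if not issues:
            return code, round_no  # all reviewers approved: the commit can land
        history.extend(issues)
    raise RuntimeError(f"still failing review after {max_rounds} rounds: {issues}")


# Toy agents so the sketch runs end to end.
def toy_implementer(task: str, history: list[str]) -> str:
    code = "def add(a, b):\n    return a + b\n"
    if "missing docstring" in history:
        code = '"""Add two numbers."""\n' + code
    return code


def docstring_reviewer(code: str) -> list[str]:
    return [] if '"""' in code else ["missing docstring"]


def correctness_reviewer(code: str) -> list[str]:
    return [] if "return a + b" in code else ["wrong result"]


code, rounds = run_commit("add two numbers", toy_implementer,
                          [docstring_reviewer, correctness_reviewer])
print(rounds)  # 2
```

Passing the full feedback history back, rather than only the latest round, keeps a stateless implementer from reintroducing problems it already fixed. The `max_rounds` cap is one way to stop a stubborn loop from running indefinitely.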

Forty-five minutes for a small commit sounds absurd. But watching it play out built more confidence than double-checking my own work ever did. The mistakes that would have slipped past my tired eyes at 11pm weren’t slipping through.

I’m spending more time on planning now—commit sequence, validation criteria, test coverage—then stepping back. I check in at testing, not implementation.

Remove the Pain, Remove the Overthinking

I started more projects in February than in any other month. I also finished more of them.

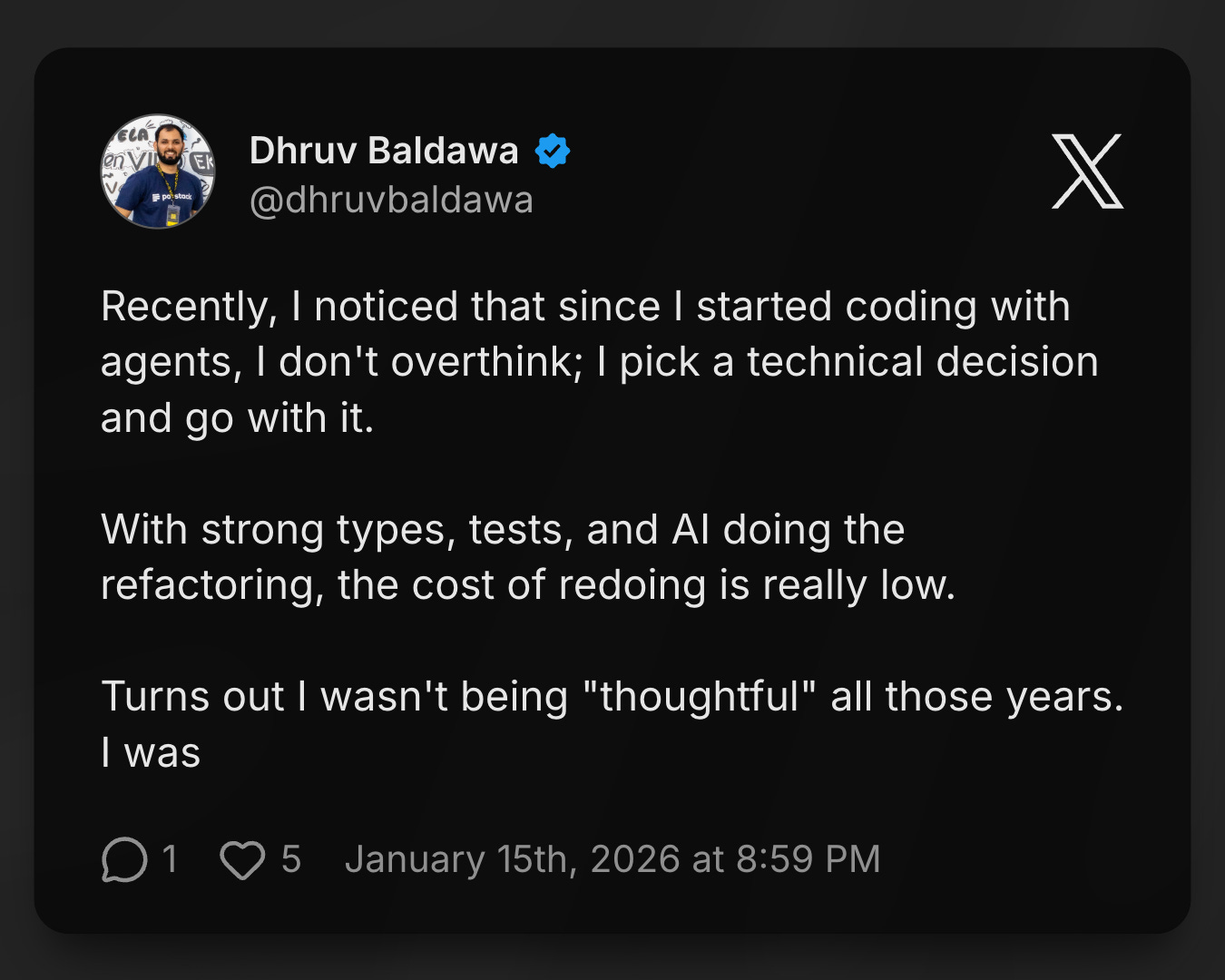

Something shifted around overthinking. I used to sit with technical decisions, weighing options and sometimes not starting at all. With AI doing the implementation, and refactoring now cheap, the cost of picking wrong and redoing it is near zero. Turns out I wasn’t being thoughtful all those years. I was just avoiding the pain of changing course. Remove the pain, remove the overthinking.

So I’d just start. In two days, I had a codebase visualizer: something that shows how the parts of a system connect, with the high-level structure up front and drill-down when you need it. It compressed 7–8 days of research into a few hours. I didn’t plan it out upfront. I started with a rough idea and iterated as my needs became clearer.

My relationship with bottlenecks changed too. Before, a bottleneck was just something to push through. Now I have two options: move fast through it, or build a tool that removes it for next time. The cost of building the tool is low enough that it’s often worth it. Either way, friction comes down and ambition goes up.

The Cost of Constant Momentum

This pace comes with two costs. First: non-determinism. AI agents are not predictable. A complicated project might take twenty minutes; a simple quality fix might run for hours. I never know what I’m going to get.

What makes it hard to stop is proximity. I had 20 tests failing. Now I have 7. It’s 30 minutes before the end of my day, and I feel close. Ten minutes later, I’m down to 1. Half an hour after I was supposed to stop, I’m still there: one failing test, one more prompt, maybe this one. I can see the finish line, and that’s what keeps me going. It’s motivating in a way that’s hard to distinguish from just being hooked.

Second: fatigue. I’ve been parallelizing a lot—when an agent is working, I’m working too, looking at other tasks, starting other things. I can sustain that for a while. But the mental fatigue compounds.

Coder or Builder

Not everyone is going to like where this is heading.

If what you love is writing code—the craft, the syntax, the satisfaction of a well-constructed function—the direction AI is heading won’t sit well with you. That work is being absorbed: slowly, then all at once.

But if what you love is building things—seeing an idea become something real that works in the world—this is probably the best moment to be doing this. AI removes the tedious stretch between having an idea and having something that runs. The part that used to take weeks now takes days. Sometimes hours.

I think about the printing press. If you had ideas, the bottleneck was never the ideas—it was how fast you could physically produce the book. The printing press removed that constraint. Suddenly, the speed of spreading an idea wasn’t limited by how fast a human could copy it.

AI does the same thing for software. The bottleneck was never the ideas; it was how fast a human could implement them. Remove that constraint, and the question changes. It’s no longer “Can I build this?” It’s “What do I want to build?”

What Made It Stick

I shipped a lot in February. The thing that surprised me was how much of it felt effortless.

The models helped. The platform helped. But the real change was knowing how to work—and trusting that enough to step back.

In the next post, I’ll get into the exact configuration: an implementer agent and a reviewer agent per commit, atomic commits with validation criteria, and how it’s all encoded in CLAUDE.md. If this post was the what, that one is the how.

Edit: The next post is now up.

If you want to follow along beyond the blog, find me on Twitter/X at @dhruvbaldawa. I’d love to hear your thoughts — what topics around AI and developer productivity are you most curious about?